Home Assistant Git Exporter

@@ -22,6 +22,10 @@ HA Add-ons by alexbelgium:

  maintainer: sguernion
  slug: 3bff5a27
  source: https://github.com/rfxcom2mqtt/hassio-addons
'Home Assistant Add-on: Zigbee2MQTT':
  maintainer: Koen Kanters <koenkanters94@gmail.com>
  slug: 45df7312
  source: https://github.com/zigbee2mqtt/hassio-zigbee2mqtt
Home Assistant Community Add-ons:
  maintainer: Franck Nijhof <frenck@addons.community>
  slug: a0d7b954

@@ -34,6 +38,10 @@ JDeath Addons:

  maintainer: jdeath
  slug: 2effc9b9
  source: https://github.com/jdeath/homeassistant-addons
Music Assistant:
  maintainer: Music Assistant <marcelveldt@users.noreply.github.com>
  slug: d5369777
  source: https://github.com/music-assistant/home-assistant-addon
NSPanel Manager:
  maintainer: NSPanel Manager Team <info@nspanelmanager.com>
  slug: a5d2b728

+1

-1

@@ -1 +1 @@

2024.5.5
2024.8.0

@@ -0,0 +1,222 @@

[
    {
        "name": " 2 flutes",
        "id": "767de33c845f4167acc9f1dd523ee3ac",
        "complete": true
    },
    {
        "name": "Torsades panzani",
        "id": "d3e86608a82349d297d7cb5058c500e7",
        "complete": true
    },
    {
        "name": "Essui tout",
        "id": "0d317248afe54b6d8c4572775360a3b4",
        "complete": true
    },
    {
        "name": "2 Choco",
        "id": "0057ba61739a45fa91d31eb84ef7056e",
        "complete": true
    },
    {
        "name": "Dates",
        "id": "5b702fc3a47244c7bfce29e27eb6b19f",
        "complete": false
    },
    {
        "name": "Bananes",
        "id": "6aa090cb21b141f8a2b37386e5896711",
        "complete": false
    },
    {
        "name": "Abricot",
        "id": "f7c78e2d17f446e0a7c862e70328254e",
        "complete": true
    },
    {
        "name": "Beurre",
        "id": "8cb3d58cd54f460c99a1ed2b265a3784",
        "complete": true
    },
    {
        "name": "creme dessert",
        "id": "c56d75c68e48429593ee586c98c1823a",
        "complete": true
    },
    {
        "name": "Petit pois",
        "id": "63e69a4131424922adf82dcde7199d01",
        "complete": true
    },
    {
        "name": "Jus de fruit",
        "id": "12f80d83e3ee401ea708b75a662b7088",
        "complete": true
    },
    {
        "name": "allumettes",
        "id": "de19c1fac9ce47b3a256fad7038f56e2",
        "complete": true
    },
    {
        "name": "cafe",
        "id": "75e54ab01fd7493eaeeace72262db613",
        "complete": true
    },
    {
        "name": "sucre",
        "id": "0dbdec86fa474f9e8b6e61917a3b811c",
        "complete": true
    },
    {
        "name": "lait",
        "id": "ca9ce2289dca47a382c084f4a4a27637",
        "complete": true
    },
    {
        "name": "Creme vanilles",
        "id": "14062f6ec2744523a03151b6d1ce3ff8",
        "complete": true
    },
    {
        "name": "Peches",
        "id": "21d7b3d751aa4f6f9a00d9fe2bfb08b1",
        "complete": true
    },
    {
        "name": "Lave vaisselle",
        "id": "c26afbde9f984049adb00c4b85ebf9ae",
        "complete": true
    },
    {
        "name": "Sirop citron",
        "id": "a3399494241f4f6696a3ec8469f9c179",
        "complete": true
    },
    {
        "name": "Choco",
        "id": "a74201251281498384db6944ff27ce59",
        "complete": true
    },
    {
        "name": "Lait",
        "id": "78dfd45120b04d798ac63020635b096c",
        "complete": true
    },
    {
        "name": "Bleu",
        "id": "6124a137218348009e641c02c7d36d9d",
        "complete": true
    },
    {
        "name": "Blanc",
        "id": "2e603522f7dd4d50a31af1acd5986c89",
        "complete": true
    },
    {
        "name": "Vinaigre blanc",
        "id": "a4f1a2acde9e4cf29804036b353d8a45",
        "complete": true
    },
    {
        "name": "Grain jaune mouche",
        "id": "164ff5e89df14b13bc1e9fc4eccbf859",
        "complete": true
    },
    {
        "name": "eponge",
        "id": "7bdaf185b84d408ca1bc510e3f184c8b",
        "complete": true
    },
    {
        "name": "Fromage blanc",
        "id": "7eec2511dacc4cd2bc41057ccc46b70c",
        "complete": true
    },
    {
        "name": "fromage bleu",
        "id": "f1cbdd4436d648e7b7634a7cf5c2796b",
        "complete": true
    },
    {
        "name": "Sauce salade",
        "id": "9ed3ece90d494813acf6326c8c05a150",
        "complete": true
    },
    {
        "name": "Carrote",
        "id": "f0f98a87cddc4dea88d9057ad6f59e59",
        "complete": true
    },
    {
        "name": "oignon jaune",
        "id": "85d93f5718e64a9ebc68ddb485eeff70",
        "complete": true
    },
    {
        "name": "Carotte surgelé ",
        "id": "f8fd9329ee304364a427aa5d05c5ba44",
        "complete": true
    },
    {
        "name": "Bieres",
        "id": "1f02899f840e4c179c7e3cee11ca182c",
        "complete": true
    },
    {
        "name": "Yaourt nature",
        "id": "8e967d12cebd474bb642f65bc6eafa8a",
        "complete": true
    },
    {
        "name": "Iles flottantes",
        "id": "6b3cff6926c14915b9bb1496c2d49a3e",
        "complete": true
    },
    {
        "name": "Aliment poule",
        "id": "941e3e0206e04e34924ec1a0bde9a46b",
        "complete": true
    },
    {
        "name": "Cassis",
        "id": "71f61483307245e9a05c4085daeff9ab",
        "complete": true
    },
    {
        "name": "Riz",
        "id": "a1640819666a404db09009d32494d5e3",
        "complete": true
    },
    {
        "name": "pernod",
        "id": "01d581130273469f90a36cb18d5f23a1",
        "complete": true
    },
    {
        "name": "Citron",
        "id": "49cff244225c4390896454e3b8e96f77",
        "complete": true
    },
    {
        "name": "Gateaux",
        "id": "6c61d1dd626543e99cf1c4b069ca76a1",
        "complete": true
    },
    {
        "name": "Riz sachet",
        "id": "6c3a91f180f8404bb6e88b942170d33e",
        "complete": true
    },
    {
        "name": "Cafe",
        "id": "c86f4ee4837044a298b0299240bde9bf",
        "complete": true
    },
    {
        "name": "Tomates grappes",
        "id": "5f4420e6430a456db1180ba262a26bd8",
        "complete": false
    }
]

@@ -0,0 +1,67 @@

db_url: mysql://homeassistant:homeassistant@core-mariadb/homeassistant?charset=utf8mb4
purge_keep_days: 30
auto_purge: true
auto_repack: true
commit_interval: 5
include:
  domains:
    - light
    - switch
    - cover
  #entity_globs:
  # - sensor*
  entities:
    - sensor.tac2100_compteur_puissance_active
    - sensor.tac2100_compteur_courant
    - sensor.ecowitt_tempin
    - sensor.select_sql_query
    - sensor.ecu_current_power
    - sensor.tac2100_solar_puissance_active
    - sensor.disjoncteur_domo_z_power
    - sensor.tac2100_compteur_energie_active_totale
    - sensor.ecu_today_energy
    - sensor.ecowitt_temp
    - sensor.ecowitt_dewpoint
    - sensor.ecowitt_humidity
    - sensor.ecowitt_humidityin
    - sensor.ecowitt_baromabs
    - sensor.ecowitt_dailyrain
    - sensor.ecowitt_eventrain
    - sensor.ecowitt_hourlyrain
    - sensor.ecowitt_monthlyrain
    - sensor.ecowitt_rainrate
    - sensor.ecowitt_solarradiation
    - sensor.ecowitt_totalrain
    - sensor.ecowitt_windgust
    - sensor.ecowitt_baromrel
    - sensor.ecowitt_uv
    - sensor.presence_cuisine_motion_state
    - sensor.ecowitt_winddir
    - sensor.ecowitt_maxdailygust
    - sensor.ecowitt_windspeed
    - sensor.ecowitt_frostpoint
    - sensor.ecowitt_feelslike
    - sensor.qualite_air_co2
    - sensor.geiger_wemos_geiger
    - sensor.froling_s3_tdeg_fumee
    - sensor.froling_s3_tdeg_board
    - sensor.froling_s3_tdeg_depart_chauffage
    - sensor.froling_s3_tdeg_chaudiere
    - sensor.esp8266_tampon_temp_4d756f_temp_retour_chauff
    - sensor.froling_s3_tampon_haut
    - sensor.froling_s3_tampon_bas
    - sensor.esp8266_tampon_temp_4d756f_tampon_milieu
    - sensor.river2_battery_level
    - sensor.tac2100_compteur_tension
    - sensor.energy_pj1203_solar_power_b
    - sensor.energy_pj1203_solar_power_a
    - sensor.energy_pj1203_solar_current_b
    - sensor.energy_pj1203_solar_current_a
    - sensor.energy_pj1203_solar_energy_flow_a
    - sensor.energy_pj1203_solar_energy_flow_b
    - sensor.energy_pj1203_solar_energy_produced_a
    - sensor.energy_pj1203_solar_energy_produced_b
    - sensor.ecu_current_power
    - sensor.dell_5520_battery_dell5520
    - sensor.blitzortung_lightning_counter
    - sensor.compteur_eclair_mensuel
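With only `include:` keys set, the recorder stores an entity when its exact entity ID, its domain, or one of its include globs matches, and drops everything else. The Python below is a minimal sketch of that rule for the configuration above, not the recorder's own filter code; the entity list is abbreviated, and the `light.kitchen` and `binary_sensor.door` IDs used in the checks are hypothetical. Because entities go into a set, the duplicated `sensor.ecu_current_power` entry collapses to a single member in this sketch.

```python
from fnmatch import fnmatchcase

# Include filter modelled on the recorder configuration above (abbreviated).
INCLUDE_DOMAINS = {"light", "switch", "cover"}
INCLUDE_ENTITIES = {
    "sensor.tac2100_compteur_puissance_active",
    "sensor.ecowitt_temp",
    "sensor.ecu_current_power",  # listed twice in the YAML; a set keeps one copy
    "sensor.compteur_eclair_mensuel",
}
# Empty because `entity_globs` is commented out; ["sensor*"] would re-enable it.
INCLUDE_GLOBS: list[str] = []


def is_recorded(entity_id: str) -> bool:
    """Return True if an include-only filter would keep this entity."""
    if entity_id in INCLUDE_ENTITIES:
        return True
    domain = entity_id.split(".", 1)[0]
    if domain in INCLUDE_DOMAINS:
        return True
    return any(fnmatchcase(entity_id, pattern) for pattern in INCLUDE_GLOBS)


if __name__ == "__main__":
    for eid in ("light.kitchen", "sensor.ecowitt_temp", "binary_sensor.door"):
        print(eid, "->", "recorded" if is_recorded(eid) else "dropped")
```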

@@ -1,42 +0,0 @@

db_url: mysql://homeassistant:homeassistant@core-mariadb/homeassistant?charset=utf8mb4
purge_keep_days: 30
auto_purge: true
include:
  domains:
    - climate
    - binary_sensor
    - input_boolean
    - input_datetime
    - input_number
    - input_select
    - sensor
    - switch
    - person
    - device_tracker
    - light
exclude:
  domains:
    - camera
    - zone
    - automation
    - sun
    - weather
    - cover
    - group
    - script
    - pool_pump
  entity_globs:
    - sensor.clock*
    - sensor.date*
    - sensor.glances*
    - sensor.load_*m
    - sensor.time*
    - sensor.uptime*
    - device_tracker.nmap_tracker*
  entities:
    - camera.front_door
    - sensor.memory_free
    - sensor.memory_use
    - sensor.memory_use_percent
    - sensor.processor_use
    - weather.openweathermap
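The exclude `entity_globs` above use shell-style wildcards. Assuming the recorder treats them like Python `fnmatch` patterns (`*` matches any run of characters and `.` is a literal dot), this sketch previews which globs would catch a given entity ID. It covers only the globs, not the `domains` or exact `entities` lists, and the sample entity IDs are hypothetical.

```python
from fnmatch import fnmatchcase

# Exclude globs copied from the recorder configuration above.
EXCLUDE_GLOBS = [
    "sensor.clock*",
    "sensor.date*",
    "sensor.glances*",
    "sensor.load_*m",
    "sensor.time*",
    "sensor.uptime*",
    "device_tracker.nmap_tracker*",
]


def matching_globs(entity_id: str) -> list[str]:
    """Return every exclude glob that matches entity_id (case-sensitive)."""
    return [glob for glob in EXCLUDE_GLOBS if fnmatchcase(entity_id, glob)]


if __name__ == "__main__":
    # Hypothetical entity IDs, for illustration only.
    for eid in ("sensor.load_5m", "sensor.load_alarm", "sensor.processor_use"):
        print(eid, "->", matching_globs(eid) or "no glob matches")
```

The preview makes one detail visible: `sensor.load_*m` matches any ID that starts with `sensor.load_` and ends in `m`, so a hypothetical `sensor.load_alarm` would be excluded along with the load-average sensors.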

@@ -1,10 +1,10 @@

- platform: systemmonitor
  resources:
    - type: processor_use
    # - type: processor_temperature
    - type: memory_free
    - type: disk_use_percent
    - type: disk_use
    - type: disk_free
    - type: load_5m
#- platform: systemmonitor
#  resources:
#    - type: processor_use
#    - type: processor_temperature
#    - type: memory_free
#    - type: disk_use_percent
#    - type: disk_use
#    - type: disk_free
#    - type: load_5m

@@ -1,17 +1,17 @@

# http://10.0.0.2:8123/hacs/repository/643579135

algorithm:
  initial_temp: 1000
  min_temp: 0.1
  cooling_factor: 0.95
  max_iteration_number: 1000
devices:
  - name: "prise_ecran"
    entity_id: "switch.prise_ecran"
    power_max: 400
    check_usable_template: "{{ is_state('sensor.energy_pj1203_energy_flow_b', 'producing') }}"
    duration_min: 6
    duration_stop_min: 3
    action_mode: "service_call"
    activation_service: "switch/turn_on"
    deactivation_service: "switch/turn_off"
# algorithm:
#   initial_temp: 1000
#   min_temp: 0.1
#   cooling_factor: 0.95
#   max_iteration_number: 1000
# devices:
#   - name: "prise_ecran"
#     entity_id: "switch.prise_ecran"
#     power_max: 400
#     check_usable_template: "{{ is_state('sensor.energy_pj1203_energy_flow_b', 'producing') }}"
#     duration_min: 6
#     duration_stop_min: 3
#     action_mode: "service_call"
#     activation_service: "switch/turn_on"
#     deactivation_service: "switch/turn_off"
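solar_optimizer describes its optimiser as simulated annealing, and the `algorithm:` keys read like a geometric cooling schedule. Assuming the temperature is multiplied by `cooling_factor` once per iteration and the search stops when it falls below `min_temp` or after `max_iteration_number` iterations, 1000 × 0.95^k first drops below 0.1 at k = 180, so the 1000-iteration cap would not be the binding limit. The Python below checks that arithmetic and adds a toy annealing loop over device on/off states; the cost function and the device powers in the example are hypothetical and are not the integration's own.

```python
import math
import random

# Parameters copied from the `algorithm:` block above.
INITIAL_TEMP = 1000.0
MIN_TEMP = 0.1
COOLING_FACTOR = 0.95
MAX_ITERATION_NUMBER = 1000


def cooling_steps(initial: float, minimum: float, factor: float, cap: int) -> int:
    """Count geometric cooling steps until the temperature drops below `minimum`."""
    temp, steps = initial, 0
    while temp >= minimum and steps < cap:
        temp *= factor
        steps += 1
    return steps


def anneal(powers: list[float], surplus: float, seed: int = 0) -> list[bool]:
    """Toy annealing: pick devices whose summed power best fits the solar surplus.

    Hypothetical cost: unused surplus when under it; anything over the surplus
    costs more than switching everything off, so it is never kept as best.
    """
    rng = random.Random(seed)

    def cost(state: list[bool]) -> float:
        used = sum(p for p, on in zip(powers, state) if on)
        if used <= surplus:
            return surplus - used
        return surplus + 10.0 * (used - surplus)

    state = [False] * len(powers)
    best = list(state)
    temp, steps = INITIAL_TEMP, 0
    while temp >= MIN_TEMP and steps < MAX_ITERATION_NUMBER:
        candidate = list(state)
        i = rng.randrange(len(powers))
        candidate[i] = not candidate[i]  # flip one device on or off
        delta = cost(candidate) - cost(state)
        # Always accept improvements; accept worse moves with probability exp(-delta / T).
        if delta <= 0 or rng.random() < math.exp(-delta / temp):
            state = candidate
            if cost(state) < cost(best):
                best = list(state)
        temp *= COOLING_FACTOR
        steps += 1
    return best


if __name__ == "__main__":
    print(cooling_steps(INITIAL_TEMP, MIN_TEMP, COOLING_FACTOR, MAX_ITERATION_NUMBER))
    print(anneal([400.0, 300.0, 200.0], surplus=550.0))
```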

+381

-390

File diff suppressed because it is too large


@@ -0,0 +1,88 @@

blueprint:
  name: On-Off schedule with state persistence
  description: '# On-Off schedule with state persistence


    A simple on-off schedule, with the addition of state persistence across disruptive
    events, making sure the target device is always in the expected state.


    📕 Full documentation regarding this blueprint is available [here](https://epmatt.github.io/awesome-ha-blueprints/docs/blueprints/automation/on_off_schedule_state_persistence).


    🚀 This blueprint is part of the **[Awesome HA Blueprints](https://epmatt.github.io/awesome-ha-blueprints)
    project**.


    ℹ️ Version 2021.10.26

    '
  source_url: https://github.com/EPMatt/awesome-ha-blueprints/blob/main/blueprints/automation/on_off_schedule_state_persistence/on_off_schedule_state_persistence.yaml
  domain: automation
  input:
    automation_target:
      name: (Required) Automation target
      description: The target which the automation will turn on and off based on the
        provided schedule.
      selector:
        target: {}
    on_time:
      name: (Required) On Time
      description: Time when the target should be placed in the on state.
      selector:
        time: {}
    off_time:
      name: (Required) Off Time
      description: Time when the target should be placed in the off state.
      selector:
        time: {}
    custom_trigger_event:
      name: (Optional) Custom Trigger Event
      description: A custom event which can trigger the state check (e.g. a power cut
        event reported by external integrations).
      default: ''
      selector:
        text: {}
    trigger_at_homeassistant_startup:
      name: (Optional) Trigger at Home Assistant startup
      description: Trigger the target state check and enforcement at Home Assistant
        startup.
      default: false
      selector:
        boolean: {}
variables:
  off_time: !input 'off_time'
  on_time: !input 'on_time'
  trigger_at_homeassistant_startup: !input 'trigger_at_homeassistant_startup'
  time_fmt: '%H:%M:%S'
  first_event: '{{ on_time if strptime(on_time,time_fmt).time() < strptime(off_time,time_fmt).time()
    else off_time }}'
  second_event: '{{ on_time if strptime(on_time,time_fmt).time() >= strptime(off_time,time_fmt).time()
    else off_time }}'
mode: single
max_exceeded: silent
trigger:
- platform: time
  at:
  - !input 'on_time'
  - !input 'off_time'
- platform: homeassistant
  event: start
- platform: event
  event_type: !input 'custom_trigger_event'
condition:
- condition: template
  value_template: '{{ trigger.platform!="homeassistant" or trigger_at_homeassistant_startup
    }}'
action:
- choose:
  - conditions:
    - condition: template
      value_template: '{{ now().time() >= strptime(first_event,time_fmt).time() and
        now().time() < strptime(second_event,time_fmt).time() }}'
    sequence:
    - service: homeassistant.{{ "turn_on" if first_event == on_time else "turn_off"}}
      target: !input 'automation_target'
  default:
  - service: homeassistant.{{ "turn_on" if second_event == on_time else "turn_off"}}
    target: !input 'automation_target'
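The `variables` block sorts the two times into `first_event` and `second_event`, and the `choose` block applies whichever event most recently passed, so the same logic covers on periods that do and do not span midnight. The Python below restates that decision as a minimal sketch, assuming times are compared as wall-clock `datetime.time` values the way `strptime(...).time()` does in the templates; the function name `target_action` is hypothetical.

```python
from datetime import time


def target_action(now: time, on_time: time, off_time: time) -> str:
    """Return the service the blueprint would call: 'turn_on' or 'turn_off'."""
    # first_event is whichever of the two times comes earlier in the day.
    first_event = on_time if on_time < off_time else off_time
    second_event = on_time if on_time >= off_time else off_time
    if first_event <= now < second_event:
        # Between the two events: the earlier event is the one in force.
        return "turn_on" if first_event == on_time else "turn_off"
    # Otherwise (late evening or early morning) the later event is in force.
    return "turn_on" if second_event == on_time else "turn_off"


if __name__ == "__main__":
    # Daytime schedule 07:00-22:00 and an overnight schedule 22:00-06:00.
    print(target_action(time(12, 0), time(7, 0), time(22, 0)))
    print(target_action(time(3, 0), time(22, 0), time(6, 0)))
```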

@@ -0,0 +1,334 @@

|

||||

blueprint:

|

||||

name: Dim lights based on sun elevation

|

||||

description: Adjust brightness of lights based on the current sun elevation. If

|

||||

force debug is enabled, you need to execute this automation manually or let Home

|

||||

Assitant restart before the change take effect.

|

||||

source_url: https://github.com/EvTheFuture/homeassistant-blueprints/blob/master/blueprints/dim_lights_based_on_sun_elevation.yaml

|

||||

domain: automation

|

||||

input:

|

||||

target_lights:

|

||||

name: Lights

|

||||

description: The lights to control the brightness of

|

||||

selector:

|

||||

target:

|

||||

entity:

|

||||

domain: light

|

||||

max_brightness:

|

||||

name: Maximum brightness percent

|

||||

description: Brightness to set as the maximum brightness

|

||||

default: 100

|

||||

selector:

|

||||

number:

|

||||

min: 2.0

|

||||

max: 100.0

|

||||

unit_of_measurement: '%'

|

||||

mode: slider

|

||||

step: 1.0

|

||||

min_brightness:

|

||||

name: Minimum brightnes percent

|

||||

description: Brightness to set as the minimum brightness

|

||||

default: 1

|

||||

selector:

|

||||

number:

|

||||

min: 1.0

|

||||

max: 99.0

|

||||

unit_of_measurement: '%'

|

||||

mode: slider

|

||||

step: 1.0

|

||||

reverse:

|

||||

name: Reverse brightness

|

||||

description: If checked, light will start dim when sun starts to set (start

|

||||

elevation value) and will be at full brightness when the elevation has reached

|

||||

the end elevation value.

|

||||

default: false

|

||||

selector:

|

||||

boolean: {}

|

||||

allowance:

|

||||

name: Change Allowance

|

||||

description: How much can the brightnes be changed without this automation stop

|

||||

updating the brightness. If set to 0% this automation will stop update the

|

||||

brightness if the brightness has been changed at all since the last triggering

|

||||

of this automation. If set to 100% this automation will keep on and update

|

||||

the brightness even if you have manually changed the brightness to any other

|

||||

value since the last trigger.

|

||||

default: 0

|

||||

selector:

|

||||

number:

|

||||

min: 0.0

|

||||

max: 100.0

|

||||

unit_of_measurement: '%'

|

||||

mode: slider

|

||||

step: 1.0

|

||||

turn_on:

|

||||

name: Turn on lights automatically

|

||||

description: Turn on lights when sun is setting.

|

||||

default: false

|

||||

selector:

|

||||

boolean: {}

|

||||

start_elevation_setting:

|

||||

name: Elevation of the sun to start dim the light when the sun is setting

|

||||

default: 0

|

||||

selector:

|

||||

number:

|

||||

min: -60.0

|

||||

max: 60.0

|

||||

unit_of_measurement: °

|

||||

mode: slider

|

||||

step: 0.5

|

||||

end_elevation_setting:

|

||||

name: Elevation of the sun when the light shall be fully dimmed when the sun

|

||||

is setting

|

||||

default: -30

|

||||

selector:

|

||||

number:

|

||||

min: -60.0

|

||||

max: 60.0

|

||||

unit_of_measurement: °

|

||||

mode: slider

|

||||

step: 0.5

|

||||

turn_off:

|

||||

name: Turn off lights automatically

|

||||

description: Turn off lights when sun has risen.

|

||||

default: false

|

||||

selector:

|

||||

boolean: {}

|

||||

start_elevation_rising:

|

||||

name: Elevation of the sun to start brighten the light when the sun is rising

|

||||

default: -8

|

||||

selector:

|

||||

number:

|

||||

min: -60.0

|

||||

max: 60.0

|

||||

unit_of_measurement: °

|

||||

mode: slider

|

||||

step: 0.5

|

||||

end_elevation_rising:

|

||||

name: Elevation of the sun when the light shall have max brightness when the

|

||||

sun is rising

|

||||

default: 6

|

||||

selector:

|

||||

number:

|

||||

min: -60.0

|

||||

max: 60.0

|

||||

unit_of_measurement: °

|

||||

mode: slider

|

||||

step: 0.5

|

||||

transition_time:

|

||||

name: Transition time in seconds between brightness values

|

||||

default: 0

|

||||

selector:

|

||||

number:

|

||||

min: 0.0

|

||||

max: 5.0

|

||||

unit_of_measurement: s

|

||||

mode: slider

|

||||

step: 0.25

|

||||

debugging:

|

||||

name: Debug logging

|

||||

description: 'WARNING: Don''t enable this unless you have activated ''logger''

|

||||

in your configuration.yaml file. Turn on debugging of this automation. In

|

||||

order for this to take effect you need to manually trigger (EXECUTE) this

|

||||

automation or let Home Assistant restart before debug will be turned on/off.'

|

||||

default: false

|

||||

selector:

|

||||

boolean: {}

|

||||

variables:

|

||||

allowance_input: !input 'allowance'

|

||||

allowance_value: '{{ allowance_input|float * 2.54 }}'

|

||||

debugging: !input 'debugging'

|

||||

target_lights: !input 'target_lights'

|

||||

entity_list: "{%- if target_lights.entity_id is string -%}\n {{ [target_lights.entity_id]\

|

||||

\ }}\n{%- else -%}\n {{ target_lights.entity_id }}\n{%- endif -%}"

|

||||

transition_time: !input 'transition_time'

|

||||

turn_on: !input 'turn_on'

|

||||

turn_off: !input 'turn_off'

|

||||

reverse: !input 'reverse'

|

||||

start_setting: !input 'start_elevation_setting'

|

||||

start_rising: !input 'start_elevation_rising'

|

||||

end_setting: !input 'end_elevation_setting'

|

||||

end_rising: !input 'end_elevation_rising'

|

||||

max_brightness_input: !input 'max_brightness'

|

||||

max_brightness: '{{ max_brightness_input|float }}'

|

||||

min_brightness_input: !input 'min_brightness'

|

||||

min_brightness: '{{ min_brightness_input|float }}'

|

||||

trigger_is_event: '{{ trigger is defined and trigger.platform == ''event'' }}'

|

||||

skip_event: '{{ trigger_is_event and trigger.event.data.service_data|length > 1

|

||||

}}'

|

||||

affected_entities: "{%- if skip_event -%}\n {{ [] }}\n{%- elif trigger is not defined\

|

||||

\ or trigger.platform != 'event' or trigger.event.data.service_data is not defined\

|

||||

\ or trigger.event.data.service_data.entity_id is not defined -%}\n {{ entity_list\

|

||||

\ }}\n{%- else -%}\n {%- if trigger.event.data.service_data.entity_id is string\

|

||||

\ -%}\n {%- set eids = [trigger.event.data.service_data.entity_id] -%}\n {%-\

|

||||

\ else -%}\n {%- set eids = trigger.event.data.service_data.entity_id -%}\n\

|

||||

\ {%- endif -%}\n {%- set data = namespace(e=[]) -%}\n {%- for e in eids -%}\n\

|

||||

\ {%- if e in entity_list -%}\n {%- set data.e = data.e + [e] -%}\n \

|

||||

\ {%- endif -%}\n {% endfor %}\n {{ data.e }}\n{%- endif -%}"

|

||||

current_states: "{%- set data = namespace(e=[]) -%} {%- for e in entity_list -%}\n\

|

||||

\ {%- set a = {'entity_id': e, 'state': states(e), 'brightness': state_attr(e,\

|

||||

\ 'brightness')} -%}\n {%- set data.e = data.e + [a] -%}\n{%- endfor -%} {{ data.e\

|

||||

\ }}"

|

||||

error_msg: "{%- if start_setting|float <= end_setting|float -%}\n {{ 'Start elevation\

|

||||

\ must be greater than end evevation when the sun is setting' }}\n{%- elif start_rising|float\

|

||||

\ >= end_rising|float -%}\n {{ 'End elevation must be greater than start evevation\

|

||||

\ when the sun is rising' }}\n{%- elif entity_list|length == 0 -%}\n {{ 'No valid\

|

||||

\ entites specified or found' }}\n{%- endif -%}"

|

||||

has_last: "{% if trigger is defined and trigger.platform == 'state' and trigger.from_state.entity_id\

|

||||

\ == 'sun.sun' -%}\n {{ True }}\n{% else %}\n {{ False }}\n{% endif %}"

|

||||

rising: '{{ state_attr(''sun.sun'', ''rising'') }}'

|

||||

last_rising: '{% if has_last %}{{ trigger.from_state.attributes.rising }}{% else

|

||||

%}{{ rising }}{% endif %}'

|

||||

elevation: '{{ state_attr(''sun.sun'', ''elevation'') }}'

|

||||

last_elevation: '{% if has_last %}{{ trigger.from_state.attributes.elevation }}{%

|

||||

else %}{{ elevation }}{% endif %}'

|

||||

force_turn_on: '{{ turn_on and not rising and last_elevation != "" and last_elevation

|

||||

>= end_setting|float and elevation <= start_setting|float }}'

|

||||

force_turn_off: '{{ turn_off and rising and last_elevation != "" and last_elevation

|

||||

<= end_rising|float and elevation >= end_rising|float }}'

|

||||

max_elevation: '{% if rising %}{{end_rising|float}}{% else %}{{start_setting|float}}{%

|

||||

endif %}'

|

||||

min_elevation: '{% if rising %}{{start_rising|float}}{% else %}{{end_setting|float}}{%

|

||||

endif %}'

|

||||

last_max_elevation: '{% if last_rising %}{{end_rising|float}}{% else %}{{start_setting|float}}{%

|

||||

endif %}'

|

||||

last_min_elevation: '{% if last_rising %}{{start_rising|float}}{% else %}{{end_setting|float}}{%

|

||||

endif %}'

|

||||

elevation_range: '{{ max_elevation - min_elevation }}'

|

||||

last_elevation_range: '{{ last_max_elevation - last_min_elevation }}'

|

||||

brightness_range: '{{ max_brightness - min_brightness }}'

|

||||

delta_to_min: '{{ elevation - min_elevation }}'

|

||||

last_delta_to_min: '{{ last_elevation|float - last_min_elevation }}'

|

||||

full_percent_raw: '{% if delta_to_min / elevation_range < 0 %}0{% elif delta_to_min

|

||||

/ elevation_range > 1 %}1{% else %}{{delta_to_min / elevation_range}}{% endif

|

||||

%}'

|

||||

full_percent: '{% if reverse %}{{1 - full_percent_raw}}{% else %}{{full_percent_raw}}{%

|

||||

endif %}'

|

||||

last_full_percent_raw: '{% if last_delta_to_min / elevation_range < 0 %}0{% elif

|

||||

last_delta_to_min / elevation_range > 1 %}1{% else %}{{last_delta_to_min / elevation_range}}{%

|

||||

endif %}'

|

||||

last_full_percent: '{% if reverse %}{{1 - last_full_percent_raw}}{% else %}{{last_full_percent_raw}}{%

|

||||

endif %}'

|

||||

brightness_pct: '{{ full_percent * brightness_range + min_brightness }}'

|

||||

last_brightness_pct: '{{ last_full_percent * brightness_range + min_brightness }}'

|

||||

brightness: '{{ (brightness_pct * 2.54)|int }}'

|

||||

last_brightness: '{{ (last_brightness_pct * 2.54)|int }}'

|

||||

turn_on_entities: "{%- if force_turn_on -%}\n {%- set data = namespace(entities=[])\

|

||||

\ -%}\n {%- for e in entity_list -%}\n {%- if not state_attr(e, 'supported_features')|bitwise_and(1)\

|

||||

\ -%}\n {%- set data.entities = data.entities + [e] -%}\n {%- endif -%}\n\

|

||||

\ {%- endfor -%}\n {{ data.entities }}\n{%- else -%}\n {{ [] }}\n{%- endif\

|

||||

\ -%}"

|

||||

dim_entities: "{%- set data = namespace(entities=[]) -%} {%- for e in entity_list\

|

||||

\ -%}\n {%- set current_brightness = state_attr(e, 'brightness') -%}\n {%- set\

|

||||

\ is_on = states(e) == 'on' -%}\n {%- set last_changed = (now() - states[e].last_changed)\

|

||||

\ -%}\n {%- set can_dim = state_attr(e, 'supported_features')|bitwise_and(1)|bitwise_or(not\

|

||||

\ is_on) -%}\n {#\n Set brightness and turn on if\n * Trigger is an event\

|

||||

\ to turn on entity and it is currently off\n OR\n * dimming is supported\

|

||||

\ by the entity AND light shall be turned on because the sun is setting (force_turn_on)\n\

|

||||

\ OR\n * dimming is supported by the entity AND light is ON AND the current\

|

||||

\ brightness differ from the new brightness\n AND\n * current brightness\

|

||||

\ is equal to last set brightness (has not been changed by the user within the\

|

||||

\ allowance)\n #}\n {%- if e in affected_entities -%}\n {%- if trigger_is_event\

|

||||

\ and (not is_on or (is_on and last_changed.seconds < 2)) -%}\n {%- set data.entities\

|

||||

\ = data.entities + [e] -%}\n {%- elif can_dim and force_turn_on -%}\n \

|

||||

\ {%- set data.entities = data.entities + [e] -%}\n {%- elif can_dim and is_on\

|

||||

\ and current_brightness != brightness and (current_brightness - last_brightness)|abs\

|

||||

\ <= allowance_value -%}\n {%- set data.entities = data.entities + [e] -%}\n\

|

||||

\ {%- endif -%}\n {%- endif -%}\n{%- endfor -%} {{ data.entities }}"

|

||||

turn_off_entities: "{%- if force_turn_off -%}\n {{ entity_list }}\n{%- else -%}\n\

|

||||

\ {{ [] }}\n{%- endif -%}"

|

||||

trigger:

|

||||

- platform: state

|

||||

entity_id: sun.sun

|

||||

attribute: elevation

|

||||

- platform: event

|

||||

event_type: call_service

|

||||

event_data:

|

||||

domain: light

|

||||

service: turn_on

|

||||

- platform: homeassistant

|

||||

event: start

|

||||

mode: queued

|

||||

action:

|

||||

- choose:

|

||||

- conditions:

|

||||

- condition: template

|

||||

value_template: '{{ debugging and trigger is not defined }}'

|

||||

sequence:

|

||||

- service: logger.set_level

|

||||

data:

|

||||

homeassistant.components.blueprint.dim_lights_based_on_sun_elevation: DEBUG

|

||||

- conditions:

|

||||

- condition: template

|

||||

value_template: '{{ debugging and trigger.platform == ''homeassistant'' and

|

||||

trigger.event == ''start'' }}'

|

||||

sequence:

|

||||

- service: logger.set_level

|

||||

data:

|

||||

homeassistant.components.blueprint.dim_lights_based_on_sun_elevation: DEBUG

|

||||

default:

|

||||

- choose:

|

||||

- conditions:

|

||||

- condition: template

|

||||

value_template: '{{ error_msg|length }}'

|

||||

sequence:

|

||||

    - service: system_log.write
      data:
        level: error
        logger: homeassistant.components.blueprint.dim_lights_based_on_sun_elevation
        message: '{{ error_msg }}'
  default:
  - choose:
    - conditions:
      - condition: template
        value_template: '{{ debugging }}'
      sequence:
      - service: system_log.write
        data:
          level: debug
          logger: homeassistant.components.blueprint.dim_lights_based_on_sun_elevation
          message: " DEBUG:\n skip_event: {{ skip_event }}\n allowance_value: {{\
            \ allowance_value }}\n affected_entities: {{ affected_entities }}\n\n\
            \ elevation: {{ elevation }} ({% if rising %}{{ start_rising ~ ', '\
            \ ~ end_rising }}{% else %}{{ start_setting ~ ', ' ~ end_setting }}{%\
            \ endif %})\n {% if last_elevation != \"\" -%}last elevation: {{ last_elevation\
            \ }}\n{% endif %} new brightness: {{ brightness }}\n {% if last_elevation\
            \ != \"\" -%}last brightness: {{ last_brightness }}\n{% endif %} \n\
            \ current_states: {{ current_states }}\n \n force_turn_on: {{ force_turn_on\
            \ }}\n force_turn_off: {{ force_turn_off }}\n \n entities: {{ entity_list\
            \ }}\n \n turn_on_entities: {{ turn_on_entities }}\n \n dim_entities:\
            \ {{ dim_entities }}\n \n turn_off_entities: {{ turn_off_entities }}\n\
            \ \n {% if trigger is defined %}Triggered by: {{ trigger.platform }}\n\
            {% endif %} {% if trigger is defined and trigger.platform == 'state'\
            \ and trigger.from_state.entity_id == 'sun.sun' -%} from: (elevation:\
            \ {{ trigger.from_state.attributes.elevation }}, azimuth: {{ trigger.from_state.attributes.azimuth\
            \ }})\n to: (elevation: {{ trigger.to_state.attributes.elevation }},\
            \ azimuth: {{ trigger.to_state.attributes.azimuth }})\n {% endif %}\
            \ {% if trigger is defined and trigger.platform == 'event' -%} entity_id:\
            \ {{ trigger.event.data.service_data.entity_id }}\n service_data_length:\
            \ {{ trigger.event.data.service_data|length }}\n complete event data:\
            \ {{ trigger.event.data }}\n {% endif %} "
    default: []
  - choose:
    - conditions:
      - condition: template
        value_template: '{{ not skip_event and turn_off_entities|length > 0 }}'
      sequence:
      - service: light.turn_off
        data:
          entity_id: '{{ turn_off_entities }}'
    - conditions:
      - condition: template
        value_template: '{{ not skip_event and turn_on_entities|length > 0 }}'
      sequence:
      - service: light.turn_on
        data:
          entity_id: '{{ turn_on_entities }}'
    - conditions:
      - condition: template
        value_template: '{{ not skip_event and dim_entities|length > 0 }}'
      sequence:
      - service: light.turn_on
        data:
          entity_id: '{{ dim_entities }}'
          brightness: '{{ brightness }}'
          transition: '{{ transition_time }}'
    default: []
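In Home Assistant, a `choose` action runs only the first option whose conditions all pass; later options are skipped even if they would also match, and `default` runs only when none match. In the final `choose` above, a single run therefore issues at most one of the three light service calls: when `turn_off_entities` is non-empty, the `turn_on` and dimming branches are not executed in that run. The Python sketch below models that first-match dispatch. `run_choose`, the option tuples and the entity IDs `light.porch` and `light.hall` are hypothetical and only illustrate the semantics; this is not Home Assistant's implementation.

```python
from typing import Callable, Optional

# Hypothetical model of a Home Assistant `choose` action: each option pairs a
# condition with an action; both receive the run's variables.
Condition = Callable[[dict], bool]
Action = Callable[[dict], str]


def run_choose(options: list, variables: dict,
               default: Optional[Action] = None) -> Optional[str]:
    """Run only the first option whose condition passes (first match wins)."""
    for condition, action in options:
        if condition(variables):
            return action(variables)
    # `default: []` in the blueprint means "do nothing", modelled here as None.
    return default(variables) if default else None


# The three options of the blueprint's final `choose`, in the same order.
options = [
    (lambda v: not v["skip_event"] and len(v["turn_off_entities"]) > 0,
     lambda v: f"light.turn_off {v['turn_off_entities']}"),
    (lambda v: not v["skip_event"] and len(v["turn_on_entities"]) > 0,
     lambda v: f"light.turn_on {v['turn_on_entities']}"),
    (lambda v: not v["skip_event"] and len(v["dim_entities"]) > 0,
     lambda v: f"light.turn_on {v['dim_entities']} brightness={v['brightness']}"),
]

variables = {
    "skip_event": False,
    "turn_off_entities": ["light.porch"],  # hypothetical entity IDs
    "turn_on_entities": [],
    "dim_entities": ["light.hall"],
    "brightness": 120,
}

# Only the turn_off branch runs; light.hall is not dimmed in this run.
print(run_choose(options, variables))
```

If several lists can be non-empty at once and all should be handled in one run, separate `choose` blocks (or plain conditional steps) would be needed instead of options of a single `choose`.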

@@ -0,0 +1,51 @@
blueprint:
  name: Cover - Immediate conditions
  description: 'Version: 1.0.1'
  domain: automation
  input:
    entities_condition:
      name: Immediate conditions
      description: Select all entities that match your immediate conditions
      selector:
        entity:
          multiple: true
    timer:
      name: Timer
      description: Timer used for remaining suspension time
      selector:
        entity:
          domain: timer
          multiple: false
    position:
      name: Desired roller shutter position
      selector:
        entity:
          domain: input_number
          multiple: false
    automation:
      name: Roller shutter positioning
      description: Automation containing roller shutter positioning rules
      selector:
        entity:
          domain: automation
          multiple: false
  source_url: https://github.com/FabienYt/home-assistant/blob/main/blueprints/automation/FabienYt/cover_immediate.yaml
mode: restart
max_exceeded: silent
trigger:
- platform: state
  entity_id: !input entities_condition
action:
- service: input_number.set_value
  target:
    entity_id: !input position
  data:
    value: -1
- service: timer.cancel
  target:
    entity_id: !input timer
- service: automation.trigger
  data:
    skip_condition: true
  target:
    entity_id: !input automation
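The HASPone blueprint that follows never asks for the plate's MQTT name. Its `haspname` template scans `device_entities(haspdevice)` for an entity matching `^sensor\..+_sensor(?:_\d+|)$`, strips the `sensor.` prefix and the `_sensor` (or `_sensor_N`) suffix, and builds the topics `hasp/<name>/state/json` and `hasp/<name>/command/page` from the result. Its second trigger condition then only accepts JSON payloads whose `event` is `page` and whose `value` differs from the target page. The Python sketch below mirrors that logic with the standard `re` and `json` modules; the function names and the example entity IDs (such as `sensor.plate01_sensor`) are hypothetical.

```python
import json
import re

# Same pattern as the blueprint's regex_search on the HASPone sensor entity.
SENSOR_RE = re.compile(r"^sensor\..+_sensor(?:_\d+|)$")


def hasp_name(entity_ids: list) -> str:
    """Mirror `haspname`: the Jinja loop concatenates the stripped name of
    every matching entity (normally exactly one)."""
    name = ""
    for entity in entity_ids:
        if SENSOR_RE.search(entity):
            stripped = re.sub(r"^sensor\.", "", entity, flags=re.IGNORECASE)
            stripped = re.sub(r"_sensor(?:_\d+|)$", "", stripped, flags=re.IGNORECASE)
            name += stripped
    return name


def topics(name: str) -> tuple:
    """Return (state JSON topic, page command topic) for a plate name."""
    return f"hasp/{name}/state/json", f"hasp/{name}/command/page"


def starts_idle_countdown(payload: str, target_page: int) -> bool:
    """Mirror the second trigger condition: a page event for another page."""
    data = json.loads(payload)
    return data.get("event") == "page" and "value" in data and data["value"] != target_page


entities = ["sensor.plate01_sensor", "number.plate01_active_page", "light.plate01_backlight"]
plate = hasp_name(entities)
print(plate, topics(plate))
print(starts_idle_countdown('{"event":"page","value":3}', target_page=1))
```

Because the Jinja loop simply concatenates matches, a device exposing two matching sensor entities would yield a merged, invalid name; the sketch keeps that behaviour rather than guessing a fix.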

|

||||

@@ -0,0 +1,137 @@

|

||||

blueprint:

|

||||

name: HASPone activates a selected page after a specified period of inactivity

|

||||

description: '

|

||||

|

||||

## Blueprint Version: `1.05.00`

|

||||

|

||||

|

||||

# Description

|

||||

|

||||

|

||||

Activates a selected page after a specified period of inactivity.

|

||||

|

||||

|

||||

## HASPone Page and Button Reference

|

||||

|

||||

|

||||

The images below show each available HASPone page along with the layout of available

|

||||

button objects.

|

||||

|

||||

|

||||

<details>

|

||||

|

||||

|

||||

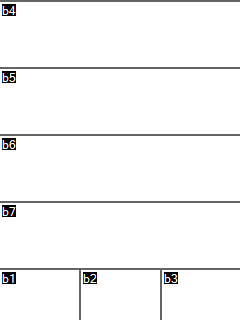

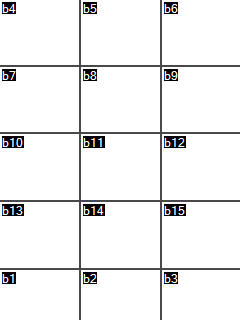

| Page 0 | Pages 1-3 | Pages 4-5 |

|

||||

|

||||

|--------|-----------|-----------|

|

||||

|

||||

|

|

||||

|

|

||||

|

|

||||

|

|

||||

|

||||

|

||||

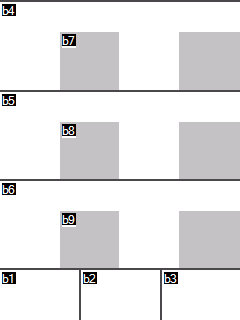

| Page 6 | Page 7 | Page 8 |

|

||||

|

||||

|--------|--------|--------|

|

||||

|

||||

|

|

||||

|

|

||||

|

|

||||

|

|

||||

|

||||

|

||||

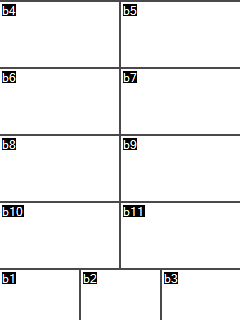

| Page 9 | Page 10 | Page 11 |

|

||||

|

||||

|--------|---------|---------|

|

||||

|

||||

|

|

||||

|

|

||||

|

|

||||

|

||||

|

||||

</details>

|

||||

|

||||

'

|

||||

domain: automation

|

||||

input:

|

||||

haspdevice:

|

||||

name: HASPone Device

|

||||

description: Select the HASPone device

|

||||

selector:

|

||||

device:

|

||||

integration: mqtt

|

||||

manufacturer: HASwitchPlate

|

||||

model: HASPone v1.0.0

|

||||

multiple: false

|

||||

targetpage:

|

||||

name: Page to activate

|

||||

description: Select a destination page for this button to activate.

|

||||

default: 1

|

||||

selector:

|

||||

number:

|

||||

min: 1.0

|

||||

max: 11.0

|

||||

mode: slider

|

||||

unit_of_measurement: page

|

||||

step: 1.0

|

||||

idletime:

|

||||

name: Idle Time

|

||||

description: Idle time in seconds

|

||||

default: 30

|

||||

selector:

|

||||

number:

|

||||

min: 5.0

|

||||

max: 900.0

|

||||

step: 5.0

|

||||

mode: slider

|

||||

unit_of_measurement: seconds

|

||||

source_url: https://github.com/HASwitchPlate/HASPone/blob/main/Home_Assistant/blueprints/hasp_Activate_Page_on_Idle.yaml

|

||||

mode: restart

|

||||

max_exceeded: silent

|

||||

variables:

|

||||

haspdevice: !input haspdevice

|

||||

haspname: "{%- for entity in device_entities(haspdevice) -%}\n {%- if entity|regex_search(\"^sensor\\..+_sensor(?:_\\d+|)$\")

|

||||

-%}\n {{- entity|regex_replace(find=\"^sensor\\.\", replace=\"\", ignorecase=true)|regex_replace(find=\"_sensor(?:_\\d+|)$\",

|

||||

replace=\"\", ignorecase=true) -}}\n {%- endif -%}\n{%- endfor -%}"

|

||||

targetpage: !input targetpage

|

||||

idletime: !input idletime

|

||||

pagecommandtopic: '{{ "hasp/" ~ haspname ~ "/command/page" }}'

|

||||

activepage: "{%- set activepage = namespace() -%} {%- for entity in device_entities(haspdevice)

|

||||

-%}\n {%- if entity|regex_search(\"^number\\..*_active_page(?:_\\d+|)$\") -%}\n

|

||||

\ {%- set activepage.entity=entity -%}\n {%- endif -%}\n{%- endfor -%} {{ states(activepage.entity)

|

||||

| int(default=-1) }}"

|

||||

trigger_variables:

|

||||

haspdevice: !input haspdevice

|

||||

haspname: "{%- for entity in device_entities(haspdevice) -%}\n {%- if entity|regex_search(\"^sensor\\..+_sensor(?:_\\d+|)$\")

|

||||

-%}\n {{- entity|regex_replace(find=\"^sensor\\.\", replace=\"\", ignorecase=true)|regex_replace(find=\"_sensor(?:_\\d+|)$\",

|

||||

replace=\"\", ignorecase=true) -}}\n {%- endif -%}\n{%- endfor -%}"

|

||||

haspsensor: "{%- for entity in device_entities(haspdevice) -%}\n {%- if entity|regex_search(\"^sensor\\..+_sensor(?:_\\d+|)$\")

|

||||

-%}\n {{ entity }}\n {%- endif -%}\n{%- endfor -%}"

|

||||

jsontopic: '{{ "hasp/" ~ haspname ~ "/state/json" }}'

|

||||

targetpage: !input targetpage

|

||||

pagejsonpayload: '{"event":"page","value":{{targetpage}}}'

|

||||

trigger:

|

||||

- platform: mqtt

|

||||

topic: '{{jsontopic}}'

|

||||

condition:

|

||||

- condition: template

|

||||

value_template: '{{ is_state(haspsensor, ''ON'') }}'

|

||||

- condition: template

|

||||

value_template: "{{-\n (trigger.payload_json.event is defined)\nand\n (trigger.payload_json.event

|

||||

== 'page')\nand\n (trigger.payload_json.value is defined)\nand\n (trigger.payload_json.value

|

||||

!= targetpage)\n-}}"

|

||||

action:

|

||||

- delay:

|

||||

seconds: '{{idletime|int}}'

|

||||

- condition: template
  value_template: "{%- set currentpage = namespace() -%} {%- for entity in device_entities(haspdevice)
    -%}\n {%- if entity|regex_search(\"^number\\..*_active_page(?:_\\d+|)$\") -%}\n
    \ {%- set currentpage.entity=entity -%}\n {%- endif -%}\n{%- endfor -%} {%-
    if states(currentpage.entity) | int(default=-1) == targetpage | int -%}\n {{false}}\n{%- else -%}\n {{true}}\n{%-
    endif -%}"
- service: mqtt.publish
  data:
    topic: '{{pagecommandtopic}}'
    payload: '{{targetpage}}'
    retain: true
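The Aqara Magic Cube blueprint that follows decodes the arguments of each `zha_event` in two templates. For slide and knock events, `activated_face` remaps the reported face through a fixed table (`{1: 1, 2: 5, 3: 6, 4: 4, 5: 2, 6: 3}`); for flips it uses the reported face unchanged. For 90-degree flips, `from_face` computes `((value - 64 - (activated_face - 1)) / 8) + 1`, which implies the encoding `value = 64 + 8 * (from_face - 1) + (activated_face - 1)`. That encoding is inferred from the template, not taken from device documentation. The Python sketch below reproduces both calculations; the function names, including the inverse helper `encode_flip90`, are hypothetical.

```python
from typing import Optional

FLIP90 = 64  # same constant as the blueprint's `flip90` variable

# Remapping the blueprint applies to slide and knock events:
# face reported in the ZHA event -> side number used by the blueprint inputs.
SLIDE_KNOCK_FACE = {1: 1, 2: 5, 3: 6, 4: 4, 5: 2, 6: 3}


def activated_face(command: str, reported_face: int) -> Optional[int]:
    """Mirror the `activated_face` template."""
    if command in ("slide", "knock"):
        return SLIDE_KNOCK_FACE.get(reported_face)
    if command == "flip":
        return int(reported_face)
    return None  # the template renders nothing for other commands


def from_face(value: int, reported_face: int) -> int:
    """Invert value = 64 + 8 * (from_face - 1) + (reported_face - 1)."""
    return (value - FLIP90 - (reported_face - 1)) // 8 + 1


def encode_flip90(from_side: int, to_side: int) -> int:
    """Encoding implied by the blueprint's from_face formula (an assumption)."""
    return FLIP90 + 8 * (from_side - 1) + (to_side - 1)


# A 90-degree flip from side 2 onto side 1 round-trips through the formula.
value = encode_flip90(2, 1)
print(value, from_face(value, 1), activated_face("knock", 2))
```

In Jinja a filter binds more tightly than `+`, so the blueprint's `... + 1 | int` applies `int` only to the literal `1`; the template therefore yields a float such as `2.0`, which still compares equal to `2` in the `from_face == 2` conditions.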

@@ -0,0 +1,544 @@
blueprint:
  name: Aqara Magic Cube
  description: Control anything using Aqara Magic Cube.
  domain: automation
  input:
    remote:
      name: Magic Cube
      description: Select the Aqara Magic Cube device
      selector:
        device:
          integration: zha
          manufacturer: LUMI
    flip_90:
      name: Flip 90 degrees
      description: 'When enabled, run the ''Flip cube 90 degrees'' action for every 90-degree flip.

        This overrides all side-specific 90-degree actions.

        E.g. flipping from side 1 to side 2 is treated the same as flipping from side 6 to side 2.'
      default: false
      selector:
        boolean: {}
    cube_flip_90:
      name: Flip cube 90 degrees
      description: Action to run when the cube flips 90 degrees. Only used if 'Flip
        90 degrees' is enabled.
      default: []
      selector:
        action: {}
    flip_180:
      name: Flip 180 degrees
      description: 'When enabled, run the ''Flip cube 180 degrees'' action for every 180-degree flip.

        This overrides all side-specific 180-degree actions.

        E.g. flipping from side 1 to side 4 is treated the same as flipping from side 5 to side 2.'
      default: false
      selector:
        boolean: {}
    cube_flip_180:
      name: Flip cube 180 degrees
      description: Action to run when the cube flips 180 degrees. Only used if 'Flip
        180 degrees' is enabled.
      default: []
      selector:
        action: {}
    slide_any_side:
      name: Slide any side
      description: 'When enabled, run the ''Slide cube on any side'' action for every slide, regardless of which side is up.

        This overrides all side-specific ''slide'' actions.

        E.g. sliding with side 1 up is treated the same as sliding with side 2 up.'
      default: false
      selector:
        boolean: {}
    cube_slide_any:
      name: Slide cube on any side
      description: Action to run when the cube slides on any side. Only used if
        'Slide any side' is enabled.
      default: []
      selector:
        action: {}
    knock_any_side:
      name: Knock on any side
      description: 'When enabled, run the ''Knock cube on any side'' action for every knock, regardless of the side.

        This overrides all side-specific ''knock'' actions.

        E.g. knocking on side 1 is treated the same as knocking on side 2.'
      default: false
      selector:
        boolean: {}
    cube_knock_any:
      name: Knock cube on any side
      description: Action to run when the cube is knocked on any side. Only used if
        'Knock on any side' is enabled.
      default: []
      selector:
        action: {}
    one_to_two:
      name: From side 1 to side 2
      description: Action to run when cube goes from side 1 to side 2
      default: []
      selector:
        action: {}
    one_to_three:
      name: From side 1 to side 3
      description: Action to run when cube goes from side 1 to side 3
      default: []
      selector:
        action: {}
    one_to_four:
      name: From side 1 to side 4
      description: Action to run when cube goes from side 1 to side 4
      default: []
      selector:
        action: {}
    one_to_five:
      name: From side 1 to side 5
      description: Action to run when cube goes from side 1 to side 5
      default: []
      selector:
        action: {}
    one_to_six:
      name: From side 1 to side 6
      description: Action to run when cube goes from side 1 to side 6
      default: []
      selector:
        action: {}
    two_to_one:
      name: From side 2 to side 1
      description: Action to run when cube goes from side 2 to side 1
      default: []
      selector:
        action: {}
    two_to_three:
      name: From side 2 to side 3
      description: Action to run when cube goes from side 2 to side 3
      default: []
      selector:
        action: {}
    two_to_four:
      name: From side 2 to side 4
      description: Action to run when cube goes from side 2 to side 4
      default: []
      selector:
        action: {}
    two_to_five:
      name: From side 2 to side 5
      description: Action to run when cube goes from side 2 to side 5
      default: []
      selector:
        action: {}
    two_to_six:
      name: From side 2 to side 6
      description: Action to run when cube goes from side 2 to side 6
      default: []
      selector:
        action: {}
    three_to_one:
      name: From side 3 to side 1
      description: Action to run when cube goes from side 3 to side 1
      default: []
      selector:
        action: {}
    three_to_two:
      name: From side 3 to side 2
      description: Action to run when cube goes from side 3 to side 2
      default: []
      selector:
        action: {}
    three_to_four:
      name: From side 3 to side 4
      description: Action to run when cube goes from side 3 to side 4
      default: []
      selector:
        action: {}
    three_to_five:
      name: From side 3 to side 5
      description: Action to run when cube goes from side 3 to side 5
      default: []
      selector:
        action: {}
    three_to_six:
      name: From side 3 to side 6
      description: Action to run when cube goes from side 3 to side 6
      default: []
      selector:
        action: {}
    four_to_one:
      name: From side 4 to side 1
      description: Action to run when cube goes from side 4 to side 1
      default: []
      selector:
        action: {}
    four_to_two:
      name: From side 4 to side 2
      description: Action to run when cube goes from side 4 to side 2
      default: []
      selector:
        action: {}
    four_to_three:
      name: From side 4 to side 3
      description: Action to run when cube goes from side 4 to side 3
      default: []
      selector:
        action: {}
    four_to_five:
      name: From side 4 to side 5
      description: Action to run when cube goes from side 4 to side 5
      default: []
      selector:
        action: {}
    four_to_six:
      name: From side 4 to side 6
      description: Action to run when cube goes from side 4 to side 6
      default: []
      selector:
        action: {}
    five_to_one:
      name: From side 5 to side 1
      description: Action to run when cube goes from side 5 to side 1
      default: []
      selector:
        action: {}
    five_to_two:
      name: From side 5 to side 2
      description: Action to run when cube goes from side 5 to side 2
      default: []
      selector:
        action: {}
    five_to_three:
      name: From side 5 to side 3
      description: Action to run when cube goes from side 5 to side 3
      default: []
      selector:
        action: {}
    five_to_four:
      name: From side 5 to side 4
      description: Action to run when cube goes from side 5 to side 4
      default: []
      selector:
        action: {}
    five_to_six:
      name: From side 5 to side 6
      description: Action to run when cube goes from side 5 to side 6
      default: []
      selector:
        action: {}
    six_to_one:
      name: From side 6 to side 1
      description: Action to run when cube goes from side 6 to side 1
      default: []
      selector:
        action: {}
    six_to_two:
      name: From side 6 to side 2
      description: Action to run when cube goes from side 6 to side 2
      default: []
      selector:
        action: {}
    six_to_three:
      name: From side 6 to side 3
      description: Action to run when cube goes from side 6 to side 3
      default: []
      selector:
        action: {}
    six_to_four:
      name: From side 6 to side 4
      description: Action to run when cube goes from side 6 to side 4
      default: []
      selector:
        action: {}
    six_to_five:
      name: From side 6 to side 5
      description: Action to run when cube goes from side 6 to side 5
      default: []
      selector:
        action: {}
    one_to_one:
      name: Knock - Side 1
      description: Action to run when knocking on side 1
      default: []
      selector:
        action: {}
    two_to_two:
      name: Knock - Side 2
      description: Action to run when knocking on side 2
      default: []
      selector:
        action: {}
    three_to_three:
      name: Knock - Side 3
      description: Action to run when knocking on side 3
      default: []
      selector:
        action: {}
    four_to_four:
      name: Knock - Side 4
      description: Action to run when knocking on side 4
      default: []
      selector:
        action: {}
    five_to_five:
      name: Knock - Side 5
      description: Action to run when knocking on side 5
      default: []
      selector:
        action: {}
    six_to_six:
      name: Knock - Side 6
      description: Action to run when knocking on side 6
      default: []
      selector:
        action: {}
    slide_on_one:
      name: Slide - Side 1 up
      description: Action to run when the cube slides with side 1 up
      default: []
      selector:
        action: {}
    slide_on_two:
      name: Slide - Side 2 up
      description: Action to run when the cube slides with side 2 up
      default: []
      selector:
        action: {}
    slide_on_three:
      name: Slide - Side 3 up
      description: Action to run when the cube slides with side 3 up
      default: []
      selector:
        action: {}
    slide_on_four:
      name: Slide - Side 4 up
      description: Action to run when the cube slides with side 4 up
      default: []
      selector:
        action: {}
    slide_on_five:
      name: Slide - Side 5 up
      description: Action to run when the cube slides with side 5 up
      default: []
      selector:
        action: {}
    slide_on_six:
      name: Slide - Side 6 up
      description: Action to run when the cube slides with side 6 up
      default: []
      selector:
        action: {}
    cube_wake:
      name: Wake up the cube
      description: Action to run when cube wakes up
      default: []
      selector:
        action: {}
    cube_drop:
      name: Cube drops
      description: Action to run when cube drops
      default: []
      selector:
        action: {}
    cube_shake:
      name: Shake cube
      description: Action to run when you shake the cube
      default: []
      selector:
        action: {}
    rotate_right:
      name: Rotate right
      description: Action to run when cube rotates right
      default: []
      selector:
        action: {}
    rotate_left:
      name: Rotate left
      description: Action to run when cube rotates left
      default: []
      selector:
        action: {}
  source_url: https://community.home-assistant.io/t/aqara-magic-cube-zha-51-actions/270829
mode: restart
max_exceeded: silent
trigger:
- platform: event
  event_type: zha_event
  event_data:
    device_id: !input 'remote'
action:
- variables:
    command: '{{ trigger.event.data.command }}'
    value: '{{ trigger.event.data.args.value }}'
    flip_degrees: '{{ trigger.event.data.args.flip_degrees }}'
    relative_degrees: '{{ trigger.event.data.args.relative_degrees }}'
    flip_90: !input 'flip_90'
    flip_180: !input 'flip_180'
    slide_any_side: !input 'slide_any_side'
    knock_any_side: !input 'knock_any_side'
    flip90: 64
    flip180: 128
    slide: 256
    knock: 512
    shake: 0
    drop: 3
    activated_face: "\n{% if command == \"slide\" or command == \"knock\" %}\n\n \
      \ {% if trigger.event.data.args.activated_face == 1 %} 1\n\n {% elif trigger.event.data.args.activated_face\
      \ == 2 %} 5\n\n {% elif trigger.event.data.args.activated_face == 3 %} 6\n\n\
      \ {% elif trigger.event.data.args.activated_face == 4 %} 4\n\n {% elif trigger.event.data.args.activated_face\
      \ == 5 %} 2\n\n {% elif trigger.event.data.args.activated_face == 6 %} 3\n\n\
      \ {% endif %}\n\n{% elif command == 'flip' %}\n\n {{ trigger.event.data.args.activated_face\
      \ | int }}\n\n{% endif %}\n"
    from_face: "\n{% if command == \"flip\" and flip_degrees == 90 %}\n\n {{ ((value\
      \ - flip90 - (trigger.event.data.args.activated_face - 1)) / 8) + 1 | int }}\n\
      \n{% endif %}\n"
- choose:
  - conditions:
    - '{{ command == ''rotate_right'' }}'
    sequence: !input 'rotate_right'
  - conditions:
    - '{{ command == ''rotate_left'' }}'
    sequence: !input 'rotate_left'
  - conditions:
    - '{{ command == ''checkin'' }}'
    sequence: !input 'cube_wake'
  - conditions:
    - '{{ value == shake }}'
    sequence: !input 'cube_shake'
  - conditions:
    - '{{ value == drop }}'
    sequence: !input 'cube_drop'
  - conditions:
    - '{{ command == ''knock'' and knock_any_side }}'
    sequence: !input 'cube_knock_any'
  - conditions:
    - '{{ command == ''slide'' and slide_any_side }}'
    sequence: !input 'cube_slide_any'
  - conditions:
    - '{{ flip_degrees == 90 and flip_90 }}'
    sequence: !input 'cube_flip_90'
  - conditions:
    - '{{ flip_degrees == 180 and flip_180 }}'
    sequence: !input 'cube_flip_180'
  - conditions:
    - '{{ flip_degrees == 90 and activated_face == 1 }}'
    sequence:
    - choose:
      - conditions: '{{ from_face == 2 }}'
        sequence: !input 'two_to_one'
      - conditions: '{{ from_face == 3 }}'
        sequence: !input 'three_to_one'
      - conditions: '{{ from_face == 5 }}'
        sequence: !input 'five_to_one'
      - conditions: '{{ from_face == 6 }}'
        sequence: !input 'six_to_one'
  - conditions:
    - '{{ flip_degrees == 90 and activated_face == 2 }}'